Which CUDA Install: Step-by-Step Guide for 2026

Learn which CUDA install to choose for your GPU, how to verify compatibility, and step-by-step setup on Windows or Linux, with notes on the limited macOS support. This practical guide from Install Manual helps homeowners, DIY enthusiasts, and developers.

This quick answer shows how to determine which CUDA install is right for your system, then gives a practical path to installing the NVIDIA driver and CUDA toolkit on Windows or Linux. Current CUDA toolkits no longer support macOS (CUDA 10.2 was the last release that did), so macOS is covered only as a legacy case. The guidance covers version compatibility, driver and toolkit pairing, and essential post-install checks to validate your setup before running GPU-accelerated workloads.

What CUDA is and why install decisions matter

CUDA is NVIDIA's parallel computing platform and programming model for GPU-accelerated applications. When you ask which CUDA install to choose, you are deciding between a Toolkit install, which includes the compiler, libraries, and development tools, and a Runtime-only option, which provides just the libraries needed to run CUDA applications. Neither replaces the NVIDIA driver, which is required in both cases. The right choice depends on your workloads, drivers, and development language stacks. For homeowners or DIY enthusiasts who plan to run GPU-accelerated software locally, a Toolkit install is usually the most straightforward and future-proof option. However, if you only need to run pre-built binaries without building CUDA code, a runtime-only install may suffice. In either case, aligning the driver version with the toolkit is critical to avoid compatibility issues. Install Manual's guidance emphasizes starting with a compatibility check against your GPU model and operating system, then selecting a version pair that is known to work well together. This approach reduces troubleshooting time and keeps your system stable while you work on projects like 3D rendering, machine learning experiments, or gaming enhancements.

Versions, runtimes, and compatibility: Understanding the toolkit vs runtime

CUDA offers both a Toolkit and a Runtime component. The Toolkit includes compilers, libraries, and samples for developing CUDA applications, while the Runtime provides the libraries needed to run CUDA-accelerated software. When determining which CUDA install to use, consider your project needs: development work typically benefits from the Toolkit, whereas running existing binaries may only require the Runtime. Compatibility is driven by three pillars: your GPU's compute capability, the driver version, and the CUDA toolkit version. NVIDIA publishes a compatibility matrix that maps driver versions to toolkit releases and the associated compute capabilities. Always verify that your GPU is supported by the chosen toolkit, and ensure the driver is recent enough to support that toolkit. If you are mixing workloads (e.g., gaming and ML), keep drivers updated, but test each installed toolkit after a driver update to catch silent failures during compilation or at runtime.

Long-term support matters here as well. Install Manual recommends planning a version strategy for your CUDA environment, especially on workstations that run multiple projects, so you can isolate environments and avoid cross-project conflicts.
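The driver-versus-toolkit check described above comes down to comparing dotted version numbers. The following is a minimal sketch, assuming you have already looked up the minimum driver version for your toolkit in NVIDIA's published compatibility table. The function names are hypothetical, and the version strings in the usage note are illustrative placeholders, not values taken from that table.

```python
import re


def parse_version(text, width=3):
    """Turn a dotted version string such as '535.104.05' into a tuple of ints.

    Short versions are padded with zeros so that '535' and '535.0.0' compare equal.
    """
    parts = [int(p) for p in re.findall(r"\d+", text)][:width]
    return tuple(parts + [0] * (width - len(parts)))


def driver_meets_minimum(installed, required):
    """Return True when the installed driver is at least the required version."""
    return parse_version(installed) >= parse_version(required)


if __name__ == "__main__":
    # Placeholder values: substitute the minimum from NVIDIA's compatibility table.
    print(driver_meets_minimum("535.104.05", "525.60.13"))
```

Comparing tuples of integers avoids the trap of comparing version strings alphabetically, where "99.1" would sort after "535.104".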

Confirming your GPU model and driver compatibility

Before you commit to any install, confirm your GPU model and its compute capability. This step determines whether your hardware supports the CUDA toolkit version you plan to install. You can check this in your system information (Windows: Device Manager or dxdiag; Linux: lspci; macOS: About This Mac). Once you know the model, cross-check it against the CUDA toolkit's compatibility page to find the supported compute capability and driver requirements. After identifying the right toolkit version, verify that your NVIDIA driver is compatible with that toolkit. If your driver is older than the minimum requirement for your intended CUDA release, update it first. This preventive check saves you from install-time errors and ensures that downstream libraries (cuDNN, TensorRT, etc.) have a stable base to build upon.

Install Manual notes that driver compatibility is often the deciding factor in a smooth CUDA install. Start with a supported driver version, then match the toolkit version to that driver. This approach reduces the risk of runtime errors and helps you stay aligned with your development stack.
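Once a driver is installed, the GPU model, compute capability, and driver version can usually be read in one call to nvidia-smi. The sketch below assumes a reasonably recent driver, since older drivers may not accept the `compute_cap` query field. The function names are hypothetical, and the sample line in the usage note is made up for illustration.

```python
import csv
import io
import subprocess

# Query fields understood by recent nvidia-smi builds (assumption: compute_cap is available).
QUERY = [
    "nvidia-smi",
    "--query-gpu=name,compute_cap,driver_version",
    "--format=csv,noheader",
]


def parse_gpu_rows(csv_text):
    """Parse 'name, compute_cap, driver_version' lines into a list of dicts."""
    rows = []
    for fields in csv.reader(io.StringIO(csv_text), skipinitialspace=True):
        if len(fields) != 3:
            continue  # skip blank or unexpected lines
        name, compute_cap, driver = (f.strip() for f in fields)
        rows.append(
            {"name": name, "compute_cap": compute_cap, "driver_version": driver}
        )
    return rows


def query_gpus():
    """Run nvidia-smi and return parsed rows, or an empty list if it is unavailable."""
    try:
        result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    except (FileNotFoundError, subprocess.CalledProcessError):
        return []
    return parse_gpu_rows(result.stdout)


if __name__ == "__main__":
    for gpu in query_gpus():
        print(gpu)
```

An empty result means either that nvidia-smi is not on PATH (often because no driver is installed yet) or that the driver rejected the query, in which case the system tools mentioned above remain the fallback.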

Which CUDA install option is right for your project

If you are developing CUDA applications or learning parallel programming, the Toolkit install is typically the best starting point because it bundles the necessary compilers and libraries. If your goal is simply to run CUDA-enabled software without developing CUDA code, a Runtime install may be sufficient. For multi-project setups, consider using environment management tools or containerization to isolate CUDA toolkits per project. Always verify compatibility with your chosen deep learning frameworks or other GPU-accelerated apps, as these often specify a minimum CUDA version and cuDNN requirement. When in doubt, install a version that is widely supported by your primary workloads and that aligns with your OS and driver combination. This reduces the likelihood of needing mid-project migrations later.

Install Manual suggests documenting your chosen CUDA version plan, including why you selected Toolkit vs Runtime, to simplify future upgrades and troubleshooting.
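A version plan does not need special tooling; a small structured record kept next to your project is enough. The sketch below shows one possible shape for such a record. The `CudaPlan` class and its field names are hypothetical, and the values in the usage note are placeholders.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class CudaPlan:
    """One record describing the CUDA setup chosen for a machine or project."""

    install_type: str      # "toolkit" or "runtime"
    toolkit_version: str   # version you decided to install
    driver_version: str    # driver version it was paired with
    reason: str            # why this combination was chosen


def plan_to_json(plan):
    """Serialize the plan to stable, human-readable JSON."""
    return json.dumps(asdict(plan), indent=2, sort_keys=True)


if __name__ == "__main__":
    # Placeholder values for illustration only.
    plan = CudaPlan(
        install_type="toolkit",
        toolkit_version="12.4",
        driver_version="550.54.15",
        reason="Needed to compile custom kernels for a rendering project",
    )
    print(plan_to_json(plan))
```

Keeping the output under version control alongside the project gives you a dated history of what was installed and why.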

Platform-specific install paths: Windows, Linux, macOS

Windows installations typically start with the NVIDIA driver, followed by the CUDA toolkit installer. Linux users may choose between package-manager installations or runfiles, depending on their distro and preference for system-wide versus user-local installations. macOS is not supported by current CUDA toolkits: CUDA 10.2 was the last release with macOS support, so only older Macs with NVIDIA GPUs and legacy toolkits apply. Across platforms, you should set up environment variables (PATH and LD_LIBRARY_PATH on Linux; PATH on Windows) so that nvcc and CUDA libraries are discoverable by your development tools. If you plan to use Python for CUDA-accelerated workflows, ensure the correct Python bindings and cuDNN versions are installed and aligned with your toolkit.

The platform differences require careful planning: Windows favors installers with a guided UI; Linux benefits from package manager orchestration; macOS needs an alternative route, such as a separate or remote machine with an NVIDIA GPU, because native CUDA support is unavailable in current releases. Always reboot after driver installation to complete the integration with the kernel and GPU.
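The environment-variable setup described above can be sketched as a function that builds a modified environment without touching the global one. This assumes a Linux-style layout with `bin` and `lib64` directories under the install root. `/usr/local/cuda` is the usual default location on Linux, `CUDA_HOME` is a common convention rather than something the toolkit requires, and the function name is hypothetical.

```python
import os


def cuda_env(cuda_home, base_env=None, platform_name=None):
    """Return a copy of the environment with CUDA directories prepended.

    platform_name follows os.name: "posix" on Linux, "nt" on Windows.
    """
    env = dict(os.environ if base_env is None else base_env)
    platform_name = platform_name or os.name

    # Make nvcc and other CUDA binaries discoverable.
    bin_dir = os.path.join(cuda_home, "bin")
    env["CUDA_HOME"] = cuda_home
    env["PATH"] = os.pathsep.join(p for p in (bin_dir, env.get("PATH", "")) if p)

    # On Linux, the dynamic loader also needs to find the CUDA shared libraries.
    if platform_name == "posix":
        lib_dir = os.path.join(cuda_home, "lib64")
        existing = env.get("LD_LIBRARY_PATH", "")
        env["LD_LIBRARY_PATH"] = os.pathsep.join(p for p in (lib_dir, existing) if p)
    return env


if __name__ == "__main__":
    env = cuda_env("/usr/local/cuda")
    print(env["PATH"].split(os.pathsep)[0])
```

Passing the returned dictionary as `env=` to `subprocess.run` scopes the change to a single command, which keeps different toolkit versions from leaking into each other's builds.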

Common issues and troubleshooting during CUDA install

Common problems include version mismatches between the driver and toolkit, missing environment variables, and missing dependencies on Linux distributions. The most frequent mismatch is a toolkit that is newer than the installed driver supports, which typically surfaces as an insufficient-driver error at runtime. NVIDIA drivers are designed to be backward compatible, so an older toolkit on a newer driver normally works. If nvcc is not found or a build fails to link, re-check the PATH and LD_LIBRARY_PATH settings (DYLD_LIBRARY_PATH on legacy macOS installs), and verify that add-on libraries such as cuDNN are installed in versions compatible with your toolkit. For Windows users, ensure that the system recognizes the GPU in Device Manager and that no conflicts exist with other NVIDIA software. On Linux, Secure Boot can prevent an unsigned NVIDIA kernel module from loading; either sign the module following your distro's guidance or disable Secure Boot. Always review the official CUDA release notes and your distro's guidance before applying updates to avoid breaking compatibility.

Install Manual highlights that planning for compatibility and keeping a record of installed versions helps you recover quickly if you need to roll back a change.
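Several of the issues above can be detected before any build is attempted. The sketch below is a small diagnostic, assuming a Linux or Windows shell environment. The function name and messages are hypothetical, and a missing `libcudart.so` on LD_LIBRARY_PATH is only a hint, since the library may also be registered with the system loader.

```python
import os
import shutil


def diagnose(env=None):
    """Return a list of human-readable problems found in the given environment."""
    env = dict(os.environ if env is None else env)
    path = env.get("PATH", "")
    problems = []

    # The compiler ships with the toolkit; the monitoring tool ships with the driver.
    if shutil.which("nvcc", path=path) is None:
        problems.append("nvcc not found on PATH")
    if shutil.which("nvidia-smi", path=path) is None:
        problems.append("nvidia-smi not found on PATH (driver may be missing)")

    # On Linux, look for the CUDA runtime library in LD_LIBRARY_PATH entries.
    if os.name == "posix":
        lib_dirs = [d for d in env.get("LD_LIBRARY_PATH", "").split(os.pathsep) if d]
        found = any(os.path.exists(os.path.join(d, "libcudart.so")) for d in lib_dirs)
        if not found:
            problems.append("libcudart.so not found via LD_LIBRARY_PATH")
    return problems


if __name__ == "__main__":
    for problem in diagnose():
        print("WARNING:", problem)
```

An empty list does not prove the install is healthy, but a non-empty one usually points straight at the variable that needs fixing.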

Validation and post-install checks before GPU workloads

After installation, perform a series of validation steps. Run nvcc --version to confirm the compiler is accessible, then run nvidia-smi to verify the driver is active and the GPU is visible. Compile and run a simple CUDA sample program to ensure basic functionality. If you intend to use higher-level frameworks (TensorFlow, PyTorch, etc.), confirm the CUDA and cuDNN versions align with the framework requirements. Finally, set up a lightweight benchmark or a small workload to validate performance and stability under typical conditions. Document any deviations from expected results and iterate on driver or toolkit versions if necessary. This final validation helps protect your workflow against subtle incompatibilities that can derail projects later on.
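The first two checks can be automated by parsing the text that the two commands print. `nvcc --version` reports the installed toolkit release, while the header of `nvidia-smi` reports the highest CUDA version the driver supports, which is not necessarily the toolkit you installed. The sketch below compares the two conservatively. The function names are hypothetical and the sample strings in the usage note are illustrative.

```python
import re


def nvcc_release(version_output):
    """Extract (major, minor) from `nvcc --version` output, or None if absent."""
    match = re.search(r"release\s+(\d+)\.(\d+)", version_output)
    return (int(match.group(1)), int(match.group(2))) if match else None


def driver_cuda_version(smi_output):
    """Extract (major, minor) from the 'CUDA Version:' field of nvidia-smi output."""
    match = re.search(r"CUDA Version:\s*(\d+)\.(\d+)", smi_output)
    return (int(match.group(1)), int(match.group(2))) if match else None


def toolkit_within_driver_limit(nvcc_output, smi_output):
    """True if the toolkit is not newer than the driver's reported CUDA version.

    Returns None when either version cannot be parsed.
    """
    toolkit = nvcc_release(nvcc_output)
    driver_max = driver_cuda_version(smi_output)
    if toolkit is None or driver_max is None:
        return None
    return toolkit <= driver_max


if __name__ == "__main__":
    # Illustrative strings in the format the two tools print.
    nvcc_text = "Cuda compilation tools, release 12.4, V12.4.131"
    smi_text = "Driver Version: 550.54.15    CUDA Version: 12.4"
    print(toolkit_within_driver_limit(nvcc_text, smi_text))
```

This is a deliberately strict check: a toolkit slightly newer than the driver's reported version can still work in some cases, so a False result is a prompt to consult NVIDIA's compatibility documentation rather than a definitive failure.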

Tools & Materials

- A computer with an NVIDIA GPU that supports CUDA (Check GPU model from vendor specs)

- Operating System (Windows 10/11 or a Linux distro; macOS only for legacy toolkits up to CUDA 10.2) (Ensure OS version is supported by chosen CUDA release)

- Internet connection (Needed to download drivers and toolkit)

- NVIDIA Driver (Match version with CUDA toolkit)

- CUDA Toolkit installer (Choose the correct version for your workloads)

- cuDNN library (optional) (If doing deep learning, ensure CUDA compatibility)

- Build tools and compiler (e.g., Visual Studio on Windows) (Needed for toolkit with C/C++ components)

- Environment variable access (admin) (Access to modify PATH)

Steps

Estimated time: 60-120 minutes

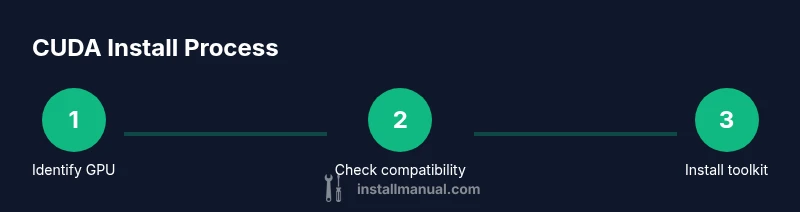

- 1

Identify your GPU model and compute capability

Open your system information to confirm the exact GPU model. Check the CUDA toolkit compatibility matrix to determine supported toolkit versions for your GPU. This step prevents selecting an incompatible combination that could fail at install or runtime.

Tip: Use a reliable online vendor database to verify model details before proceeding.

- 2

Check supported CUDA versions for your GPU

Review the CUDA toolkit release notes for compute capability support and driver requirements. Matching the toolkit version to your GPU's capabilities helps avoid feature gaps and driver conflicts during development.

Tip: Prefer a widely supported, long-term stable toolkit version for production workloads.

- 3

Decide toolkit vs runtime based on needs

If you plan to develop CUDA code, select the Toolkit install. If you only need to run pre-built CUDA software, a Runtime install may be sufficient. Consider future projects when choosing.

Tip: For multi-project development, compartmentalize toolkits by project using environment managers.

- 4

Download the correct GPU driver

Download the latest driver that supports your chosen toolkit version from NVIDIA’s official site. Do not skip driver verification, as an incompatible driver can block the entire installation.

Tip: If you have multiple GPUs, ensure the primary GPU is listed in Device Manager or lspci before installing.

- 5

Prepare OS prerequisites

For Windows, close all running apps and disable antivirus during install if advised. For Linux, install required dependencies, and if Secure Boot is enabled, either sign the NVIDIA kernel module following your distro's guidance or disable Secure Boot. macOS users should note that only legacy toolkits (up to CUDA 10.2) support macOS.

Tip: Run installation as administrator or root to avoid permission issues.

- 6

Uninstall older conflicting NVIDIA software

If previous CUDA versions or drivers exist, consider a clean uninstall to avoid conflicts. Use the official cleanup utility if available and reboot.

Tip: Maintain a backup of your previous toolkit in case you need to roll back.

- 7

Install the driver first

Install the NVIDIA driver and reboot to ensure the kernel module loads correctly. The driver must be active before installing the CUDA toolkit.

Tip: After reboot, verify GPU visibility with nvidia-smi.

- 8

Install the CUDA toolkit with a matching version

Run the CUDA toolkit installer and choose a version compatible with your driver. On Linux, decide between a package manager or runfile based on your distro policy.

Tip: Do not install components you do not plan to use to reduce clutter.

- 9

Set environment variables correctly

Add the CUDA binaries to PATH and, on Linux, add the CUDA library directory to LD_LIBRARY_PATH (legacy macOS installs used DYLD_LIBRARY_PATH instead). Update your shell profile to persist changes across sessions.

Tip: Use a project-specific activation script to avoid global PATH pollution.

- 10

Verify the installation

Run nvcc --version to confirm the compiler is accessible. Use nvidia-smi to verify the driver and device status. Compile and run a simple CUDA sample to validate runtime readiness.

Tip: If nvcc isn’t found, recheck PATH and reinstall with the correct options.

- 11

Install optional libraries for workloads

For ML workloads, install cuDNN and other libraries compatible with your CUDA version. Ensure the library versions match the toolkit and your framework requirements.

Tip: Use official installation guides for cuDNN to avoid API mismatches.

- 12

Run a quick benchmark and finalize

Execute a small benchmark to confirm performance and stability. Document results and back up the configuration for future upgrades or migrations.

Tip: Schedule periodic driver/toolkit updates and re-verify after upgrades.
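The "compile and run a simple CUDA sample" check from step 10 can be scripted so it is easy to repeat after every upgrade. The sketch below is one way to do it, assuming a Linux system with nvcc on PATH and a host compiler installed. The embedded vector-add program and the `run_sample` helper are illustrative and are not one of NVIDIA's official samples.

```python
import shutil
import subprocess
import tempfile
from pathlib import Path

# A tiny CUDA program: add two vectors on the GPU and verify the result on the host.
VECTOR_ADD_CU = r"""
#include <cstdio>
#include <cuda_runtime.h>

__global__ void add(const float *a, const float *b, float *c, int n) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) c[i] = a[i] + b[i];
}

int main() {
    const int n = 1 << 16;
    float *a, *b, *c;
    if (cudaMallocManaged(&a, n * sizeof(float)) != cudaSuccess) return 1;
    if (cudaMallocManaged(&b, n * sizeof(float)) != cudaSuccess) return 1;
    if (cudaMallocManaged(&c, n * sizeof(float)) != cudaSuccess) return 1;
    for (int i = 0; i < n; ++i) { a[i] = 1.0f; b[i] = 2.0f; }
    add<<<(n + 255) / 256, 256>>>(a, b, c, n);
    if (cudaGetLastError() != cudaSuccess) return 1;
    if (cudaDeviceSynchronize() != cudaSuccess) return 1;
    bool ok = true;
    for (int i = 0; i < n; ++i) ok = ok && (c[i] == 3.0f);
    std::puts(ok ? "PASS" : "FAIL");
    cudaFree(a); cudaFree(b); cudaFree(c);
    return ok ? 0 : 1;
}
"""


def run_sample():
    """Compile and run the sample. Returns None if nvcc is missing, else True/False."""
    nvcc = shutil.which("nvcc")
    if nvcc is None:
        return None  # toolkit not on PATH, so there is nothing to test
    with tempfile.TemporaryDirectory() as tmp:
        src = Path(tmp) / "vector_add.cu"
        exe = Path(tmp) / "vector_add"
        src.write_text(VECTOR_ADD_CU)
        build = subprocess.run([nvcc, str(src), "-o", str(exe)],
                               capture_output=True, text=True)
        if build.returncode != 0:
            return False  # compile or link failure
        run = subprocess.run([str(exe)], capture_output=True, text=True)
        return run.returncode == 0 and "PASS" in run.stdout


if __name__ == "__main__":
    print({None: "nvcc not found", True: "sample passed", False: "sample failed"}[run_sample()])
```

A failed build points at the toolkit or host compiler, while a successful build followed by a failed run more often points at the driver or GPU visibility.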

Got Questions?

Do I need to uninstall previous CUDA versions before installing a new one?

Not always. CUDA toolkits install into versioned directories, so several versions can usually coexist as long as the driver is new enough for the newest one. If you encounter conflicts or framework mismatches, perform a clean uninstall and reinstall the desired versions. Always follow the official cleanup steps for your OS.

You usually don’t need to uninstall first, but if you see conflicts or your frameworks fail to load, consider a clean uninstall and reinstall the versions you plan to use.

Can I install CUDA without an NVIDIA GPU?

CUDA accelerates workloads on NVIDIA GPUs. Without an NVIDIA GPU, you cannot run CUDA-accelerated code. You can still install the toolkit and compile CUDA code with nvcc for development purposes, but there will be no GPU acceleration.

CUDA requires an NVIDIA GPU to run. You can install tools to prepare environments, but you won’t get GPU acceleration without a suitable GPU.

Which CUDA version should I install for TensorFlow or PyTorch?

Check the compatibility matrix for your chosen framework. Install a CUDA version that is supported by both the framework and cuDNN version you plan to use. If you work with multiple frameworks, select a version that is widely supported and document it.

Look up your framework’s compatibility and pick a CUDA version that matches cuDNN as well. Document your choice for future projects.
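For PyTorch specifically, the framework itself can report which CUDA and cuDNN versions it was built against, which is a quick way to confirm the match after installation. The sketch below assumes PyTorch may or may not be installed and returns None when it is absent. The `torch_cuda_report` helper name is hypothetical.

```python
import importlib.util


def torch_cuda_report():
    """Report PyTorch's view of CUDA, or None when PyTorch is not installed."""
    if importlib.util.find_spec("torch") is None:
        return None
    import torch  # imported lazily so the script also runs without PyTorch

    return {
        "torch": torch.__version__,
        "built_for_cuda": torch.version.cuda,        # None for CPU-only builds
        "cudnn": torch.backends.cudnn.version(),     # None if cuDNN is unavailable
        "gpu_visible": torch.cuda.is_available(),
    }


if __name__ == "__main__":
    print(torch_cuda_report())
```

If `built_for_cuda` is None, the installed package is a CPU-only build, and no change to the system toolkit will enable GPU acceleration until a CUDA-enabled build is installed.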

How do I verify CUDA is correctly installed?

Run nvcc --version to confirm the compiler, then nvidia-smi to verify the driver and GPU visibility. Compile and run a simple CUDA sample to ensure the runtime works as expected.

Check the nvcc version and GPU visibility with nvidia-smi, then test a small CUDA sample.

Is CUDA install different on Linux vs Windows?

Yes. Windows uses installers with a guided UI and often a driver-first sequence, while Linux may use package managers or runfiles and requires manual environment variable setup. macOS support has narrowed in recent years, so verify platform compatibility before proceeding.

Linux installations often rely on package managers, while Windows uses installers. macOS support can be limited, so check compatibility first.

Do I need admin rights to install CUDA?

Yes. Installing drivers and toolkit typically requires admin or root privileges. Ensure you have permission before starting the install and follow your OS's security guidelines.

Administrative privileges are usually required for CUDA installation. Make sure you have the right permissions before you begin.

Main Points

- Identify your GPU and OS compatibility first.

- Choose toolkit vs runtime based on development needs.

- Always match driver and toolkit versions.

- Validate post-install with nvcc and sample workloads.